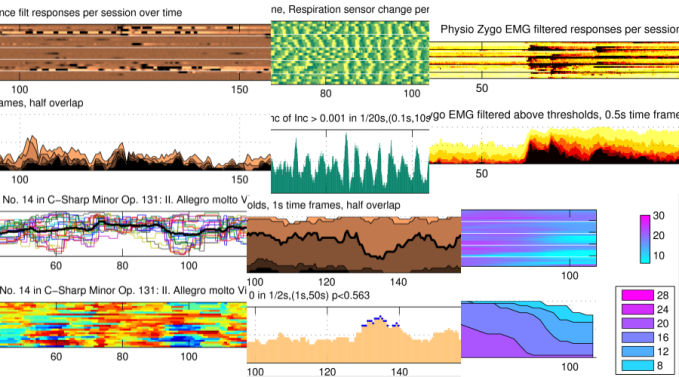

In the Solo Response Project, I recorded my own responses to a couple dozen pieces of music every day for most of a month, both self-report and psychophysiological, to generate a data set that would let me compare experiences as captured through these measurement systems. The data set has mostly been used behind the scenes to tune signal processing and statistics, but there is plenty to learn about the music as well, given how I reacted to these stimuli.

On the project website, there is now a complete set of stimulus-wise posts sharing plots of how I responded to these pieces of music as they played and over successive listenings. Each post includes a recording of the stimulus (more or less), and figures about each of:

- Continuous felt emotion ratings,

- Surface electromyography of the face (Zygomaticus and Corrugator) and of the upper Trapezius,

- Heart rate and Respiration rate,

- Respiration phases,

- Skin Conductance and Finger Temperature.

The text doesn’t explain much, but those familiar with any of these signals will find it interesting to see how a single participant’s responses can vary over time. Some highlights from the amalgam above (left to right, top to bottom):

- The familiar subito fortissimo [100s] and continued thundering in O Fortuna from Carmina Burana is so effective that my skin conductance kept peaking through that final section. (At least on those days when GSR was being picked up at all.)

- Some instances of respiratory phase alignment were unbelievably strong, for example to Thieving Boy by Cleo Laine [85s].

- Evidence that I still can’t help but smile at the way Charles Trenet pronounces the wordplay in “Boum!” (“flic-flac-flic-flic” [60s]).

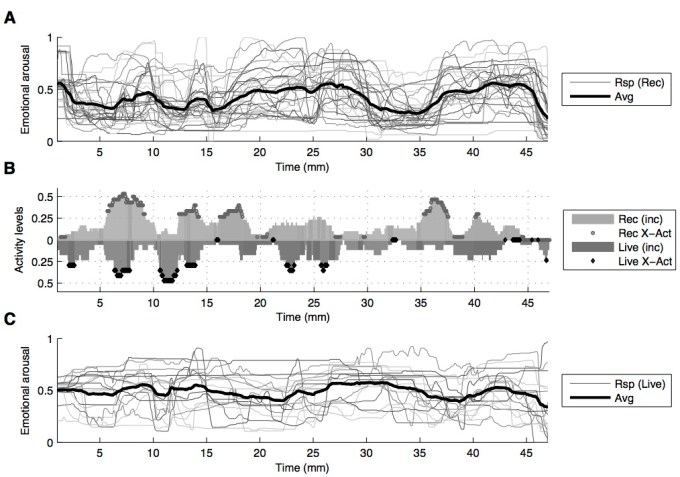

- Self-reported felt emotional responses can change from listening to listening, particularly to complex stimuli like Beethoven’s String Quartet No. 14 in C-sharp minor.

- Finger temperature plunging [130s] with the roaring coda [118s] in the technical death metal piece Portal by the band Origin.

- Respiration getting progressively slower at the end [90s] of a sweet bassoon and harp duet by Debussy called Romance.

There is still a lot to say about the responses to the 25 stimuli used in this project, but as always, anyone is welcome to poke through the posts to look, listen, and consider what might be going on.